Julia has support for sparse vectors and sparse matrices in the SparseArrays stdlib module. Instrumenting Julia with DTrace, and bpftrace.Reporting and analyzing crashes (segfaults).Fixing precompilation hangs due to open tasks or IO.Static analyzer annotations for GC correctness in C code.Proper maintenance and care of multi-threading locks.printf() and stdio in the Julia runtime.Talking to the compiler (the :meta mechanism).High-level Overview of the Native-Code Generation Process.Correspondence of dense and sparse methods.Compressed Sparse Column (CSC) Sparse Matrix Storage.Noteworthy Differences from other Languages.Multi-processing and Distributed Computing.Mathematical Operations and Elementary Functions.That is, the original matrix lists only the words actually in each document, whereas we might be interested in all words related to each document-generally a much larger set due to synonymy. The original term-document matrix is presumed overly sparse relative to the "true" term-document matrix.From this point of view, the approximated matrix is interpreted as a de-noisified matrix (a better matrix than the original). The original term-document matrix is presumed noisy: for example, anecdotal instances of terms are to be eliminated.The original term-document matrix is presumed too large for the computing resources in this case, the approximated low rank matrix is interpreted as an approximation (a "least and necessary evil").There could be various reasons for these approximations: This matrix is also common to standard semantic models, though it is not necessarily explicitly expressed as a matrix, since the mathematical properties of matrices are not always used.Īfter the construction of the occurrence matrix, LSA finds a low-rank approximation to the term-document matrix. 
A typical example of the weighting of the elements of the matrix is tf-idf (term frequency–inverse document frequency): the weight of an element of the matrix is proportional to the number of times the terms appear in each document, where rare terms are upweighted to reflect their relative importance. LSA can use a document-term matrix which describes the occurrences of terms in documents it is a sparse matrix whose rows correspond to terms and whose columns correspond to documents. The resulting patterns are used to detect latent components. LSA groups both documents that contain similar words, as well as words that occur in a similar set of documents. by tf-idf), dark cells indicate high weights. A cell stores the weighting of a word in a document (e.g. Every column corresponds to a document, every row to a word. Overview Animation of the topic detection process in a document-word matrix. In the context of its application to information retrieval, it is sometimes called latent semantic indexing ( LSI). Īn information retrieval technique using latent semantic structure was patented in 1988 ( US Patent 4,839,853, now expired) by Scott Deerwester, Susan Dumais, George Furnas, Richard Harshman, Thomas Landauer, Karen Lochbaum and Lynn Streeter. Values close to 1 represent very similar documents while values close to 0 represent very dissimilar documents. Documents are then compared by cosine similarity between any two columns.

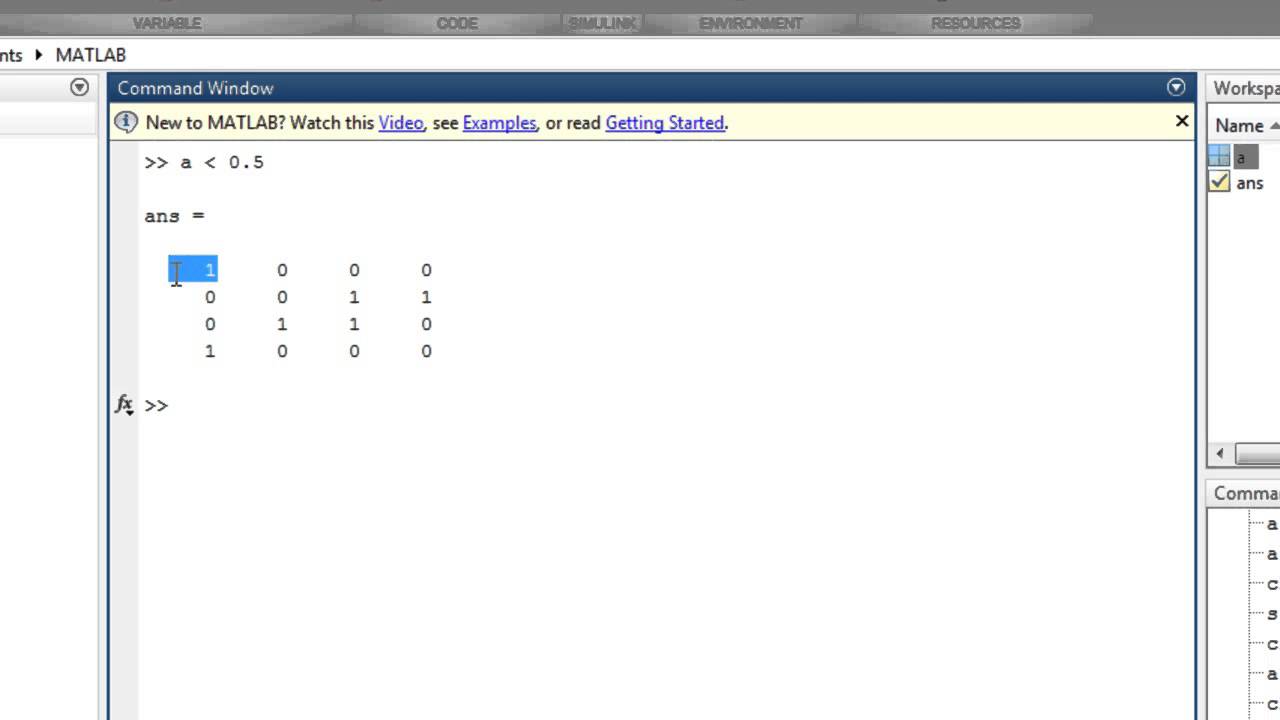

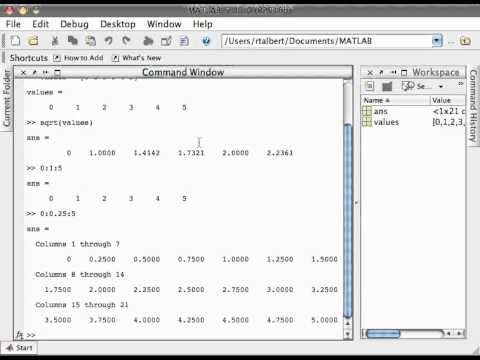

A matrix containing word counts per document (rows represent unique words and columns represent each document) is constructed from a large piece of text and a mathematical technique called singular value decomposition (SVD) is used to reduce the number of rows while preserving the similarity structure among columns.

LSA assumes that words that are close in meaning will occur in similar pieces of text (the distributional hypothesis). Latent semantic analysis ( LSA) is a technique in natural language processing, in particular distributional semantics, of analyzing relationships between a set of documents and the terms they contain by producing a set of concepts related to the documents and terms.
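A minimal sketch of the LSA pipeline (count matrix, tf-idf weighting, rank-k truncated SVD, cosine similarity between documents), written in Python with NumPy. Everything specific here is an assumption for illustration, not taken from the text: the four-document toy corpus is hypothetical, tokenization is plain whitespace splitting, the idf formula `ln((1 + N) / (1 + df)) + 1` is just one common smoothed variant, and the rank `k = 2` is arbitrary. The helper names are likewise made up for this example.

```python
import numpy as np


def term_document_matrix(docs):
    """Raw counts: rows are unique terms, columns are documents."""
    vocab = sorted({w for d in docs for w in d.lower().split()})
    row = {w: i for i, w in enumerate(vocab)}
    counts = np.zeros((len(vocab), len(docs)))
    for j, doc in enumerate(docs):
        for w in doc.lower().split():
            counts[row[w], j] += 1
    return vocab, counts


def tfidf(counts):
    """Scale each term's row by a smoothed idf, so rarer terms get larger weights.

    Assumption: idf = ln((1 + N) / (1 + df)) + 1, one common variant among several.
    """
    n_docs = counts.shape[1]
    df = np.count_nonzero(counts, axis=1)  # number of documents containing each term
    idf = np.log((1 + n_docs) / (1 + df)) + 1
    return counts * idf[:, None]


def lsa_doc_vectors(weights, k):
    """Keep the k largest singular values; return one k-dimensional vector per document."""
    _, s, vt = np.linalg.svd(weights, full_matrices=False)
    return (s[:k, None] * vt[:k, :]).T  # row j is document j in the reduced space


def cosine(a, b):
    """Cosine similarity between two document vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


# Hypothetical toy corpus: two documents about matrices, two about animals.
docs = [
    "sparse matrix storage",
    "sparse matrix factorization",
    "cats chase mice",
    "dogs chase cats",
]

vocab, counts = term_document_matrix(docs)
doc_vecs = lsa_doc_vectors(tfidf(counts), k=2)
print(cosine(doc_vecs[0], doc_vecs[1]))  # approximately 1: same topic
print(cosine(doc_vecs[0], doc_vecs[2]))  # approximately 0: no shared terms
```

Because the two topics in this toy corpus share no terms, the rank-2 space separates them cleanly. On real text the rank k is a tuning choice, and the value used here is only for illustration.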
